Generative Muscle Stimulation: Providing Users with Physical Assistance by Constraining Multimodal-AI with Embodied Knowledge

Abstract

Electrical-muscle-stimulation (EMS) can support physical-assistance (e.g., shaking a spray-can before painting). However, EMS-assistance is highly-specialized because it is (1) fixed (e.g., one program for shaking spray-cans, another for opening windows); and (2) non-contextual (e.g., a spray-can for cooking dispenses cooking-oil, not paint—shaking it is unnecessary). Instead, we explore a different approach where muscle-stimulation instructions are generated considering the user's context (e.g., pose, location, surroundings). The resulting system is more general—enabling unprecedented EMS-interactions (e.g., opening a pill-bottle) yet also replicating existing systems (e.g., Affordance++) without task-specific programming. It uses computer-vision/large-language-models to generate EMS-instructions, constraining these to a muscle-stimulation knowledge-base & joint-limits. In our user-study, we found participants successfully completed physical-tasks while guided by generative-EMS, even when EMS-instructions were (purposely) erroneous. Participants understood generated-gestures and, even during forced-errors, understood partial-instructions, identified errors, and re-prompted the system. We believe our concept marks a shift toward more general-purpose EMS-interfaces.

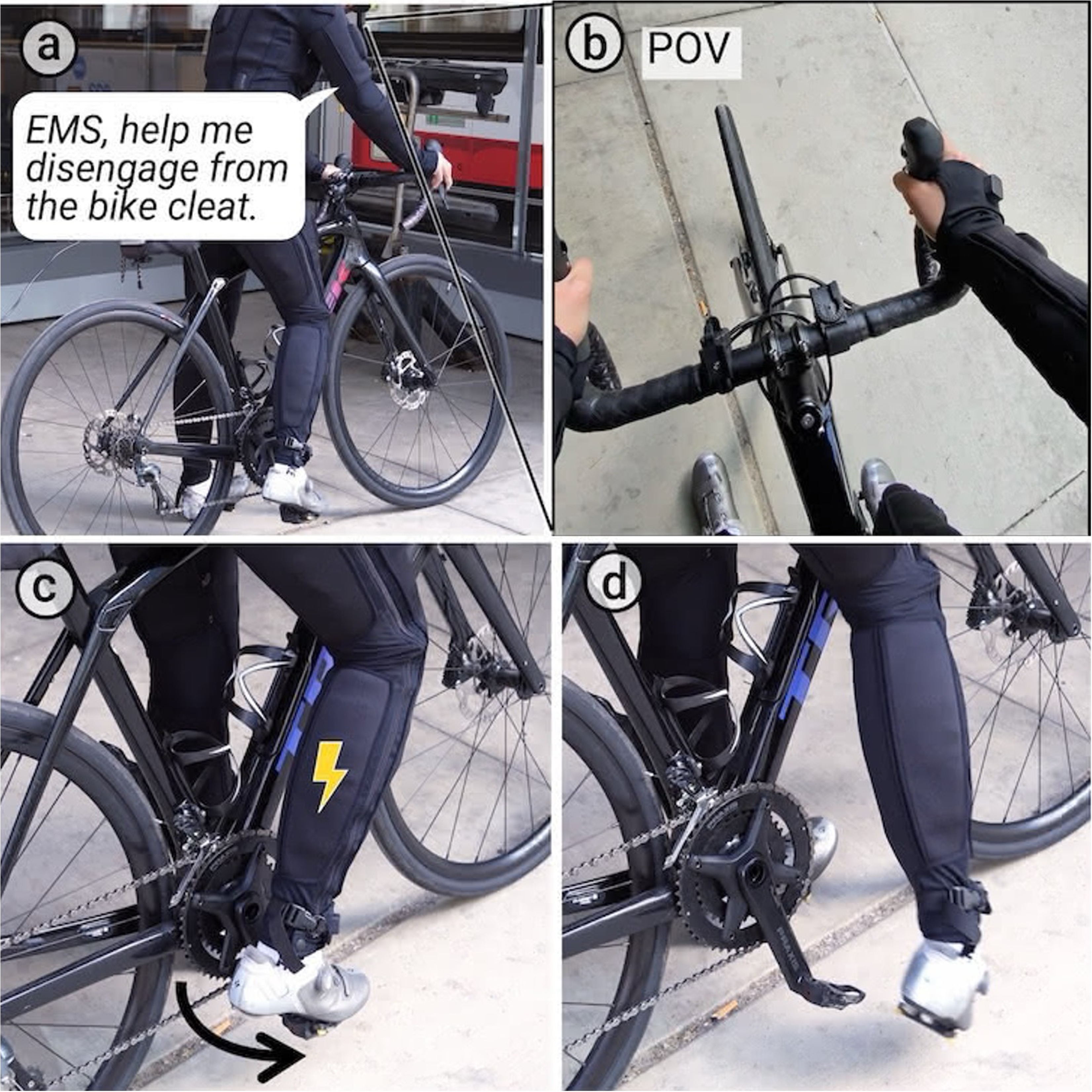

Demonstration of Our System

How it Works

Unlike traditional EMS systems, which rely on fixed, task-specific programs, our system uses multimodal-AI reasoning to generate muscle instructions that fit the user's context. It combines visual data from camera glasses with contextual cues (e.g., the user's location) to generate textual instructions, which are then translated into specific muscle-stimulation patterns. To prevent the AI from requesting physically impossible movements, a constraint layer restricts its output to human joint limits and muscle-stimulation knowledge. Other implementations are possible (e.g., a single end-to-end model or a different constraint mechanism); we chose this architecture to demonstrate the core concept of Embodied-AI through muscle stimulation, and that concept is not tied to this specific implementation. Please refer to our full paper for more details on the system architecture and implementation.
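To make the idea of a constraint layer more concrete, here is a minimal Python sketch of one way generated instructions could be filtered before stimulation. It is an illustration only, not the implementation from the paper: the names `Instruction`, `JOINT_LIMITS`, `EMS_KNOWLEDGE_BASE`, and `constrain`, the channel labels, and all angle values are assumptions made up for this example.

```python
from dataclasses import dataclass

# Hypothetical joint limits in degrees (illustrative values, not from the paper).
JOINT_LIMITS = {
    "wrist_flexion": (0.0, 60.0),
    "elbow_flexion": (0.0, 140.0),
}

# Hypothetical knowledge base: movements the EMS hardware can actuate,
# mapped to an assumed electrode channel.
EMS_KNOWLEDGE_BASE = {
    "wrist_flexion": "channel_1",
    "elbow_flexion": "channel_2",
}


@dataclass
class Instruction:
    """One generated movement request (assumed format)."""
    movement: str
    target_angle: float  # degrees


def constrain(instructions):
    """Drop movements that cannot be actuated and clamp angles to joint limits."""
    accepted = []
    for ins in instructions:
        # Reject anything missing from the knowledge base or the limits table.
        if ins.movement not in EMS_KNOWLEDGE_BASE or ins.movement not in JOINT_LIMITS:
            continue
        low, high = JOINT_LIMITS[ins.movement]
        clamped = min(max(ins.target_angle, low), high)
        accepted.append((EMS_KNOWLEDGE_BASE[ins.movement], ins.movement, clamped))
    return accepted


# Example: one request exceeds a joint limit, one is not actuatable.
generated = [
    Instruction("wrist_flexion", 95.0),
    Instruction("neck_rotation", 30.0),
    Instruction("elbow_flexion", 90.0),
]
print(constrain(generated))
# [('channel_1', 'wrist_flexion', 60.0), ('channel_2', 'elbow_flexion', 90.0)]
```

In this sketch, the out-of-range wrist request is clamped to the assumed limit and the unsupported movement is dropped. A real system might instead reject or regenerate such instructions; the full paper describes the approach actually used.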

Physical Assistance

System Evaluation

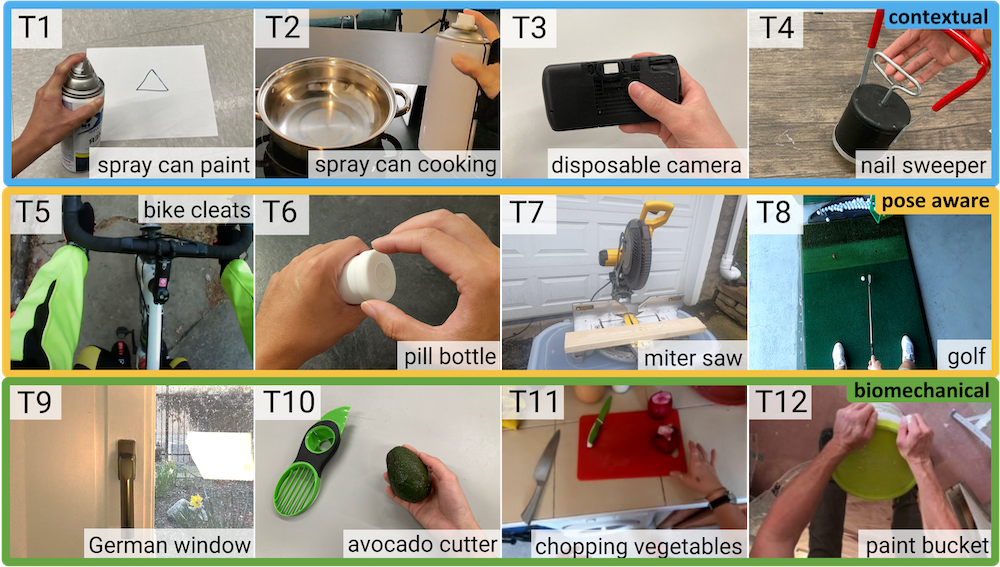

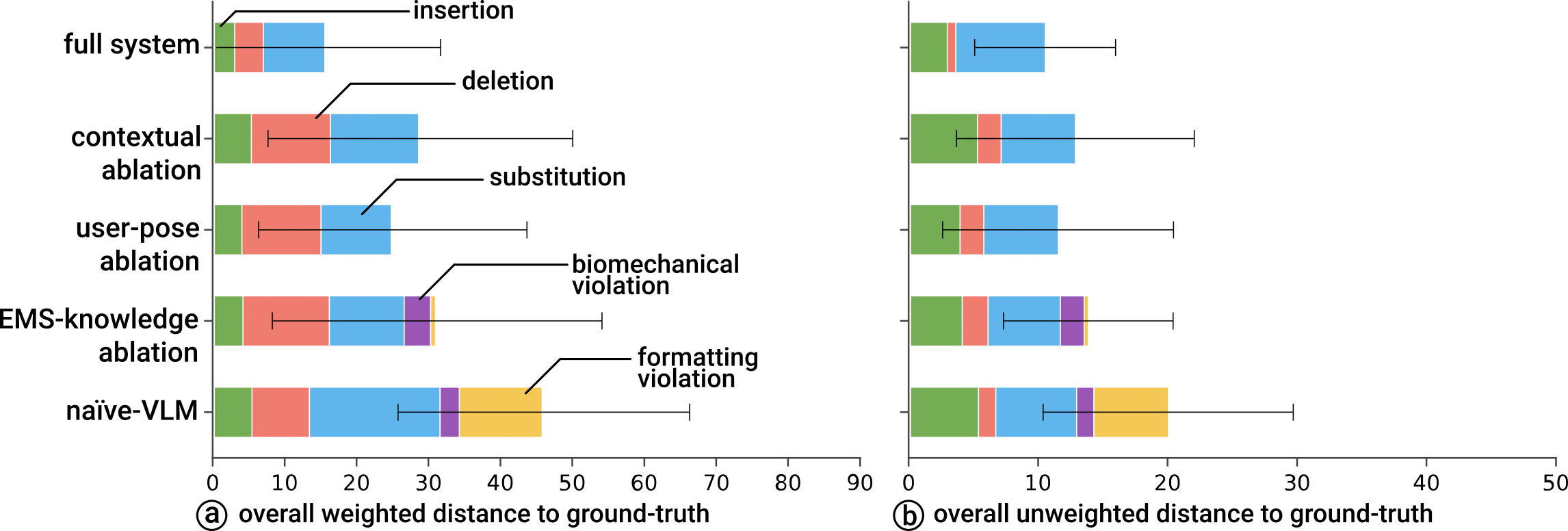

We evaluated our system through an ablation study across 12 physical tasks, benchmarking its output against expert-authored ground-truth instructions. Refer to our full paper for more details about the ablation study.

We found that each module of our system provides a net-positive contribution: (1) the absence of contextual cues led to movement errors; (2) the absence of pose information led to movements that did not respect the current body pose; and, finally, (3) the absence of EMS knowledge led to incorrect EMS instructions, some of which even violated physical human constraints (i.e., were unergonomic).

User Study

We evaluated our system with 12 participants across six physical tasks. The study was designed to examine not only what happens when our system generates correct instructions but, especially, what happens when the generated instructions are erroneous (e.g., an incorrect sequence, the wrong limb, or a nonsensical movement). We observed that participants were generally able to recover by leveraging system features (e.g., repeating movements, slowing down playback, or re-prompting) and successfully completed their tasks. Refer to our full paper for more details about the user study.

The Team

This research is from the Human-Computer Integration Lab at the University of Chicago.

BibTeX

@inproceedings{ho_generative_2026,
  address = {Barcelona, Spain},
  series = {{CHI}'26},
  title = {Generative {Muscle} {Stimulation}: {Providing} {Users} with {Physical} {Assistance} by {Constraining} {Multimodal}-{AI} with {Embodied} {Knowledge}},
  doi = {10.1145/3772318.3790817},
  booktitle = {Proceedings of the {CHI} {Conference} on {Human} {Factors} in {Computing} {Systems}},
  publisher = {Association for Computing Machinery},
  author = {Ho, Yun and Nith, Romain and Jian, Peili and He, Steven and Felalaga, Bruno and Teng, Shan-Yuan and Seeralan, Rhea and Lopes, Pedro},
  month = apr,
  year = {2026},
  pages = {1--22},
}